Velda, which drew attention through its Show HN post on Hacker News, targets organizations and individual developers who want to run GPU-powered tasks without the overhead of traditional containerization. In 2026, its approach to serverless GPU job execution offers a different way to manage, execute, and optimize data-intensive projects for efficiency and cost.

Velda: How to Run GPU-Accelerated Jobs Serverlessly Without Containers in 2026

Cloud computing keeps pushing toward greater flexibility and performance. Velda offers a platform that simplifies GPU-accelerated jobs, removes the reliance on containers, and provides a serverless experience. This article explores Velda’s capabilities, its strategic advantages, and how developers and businesses can use it to optimize their workflows in 2026 and beyond.

Introduction to Velda and Show HN Velda Run

Velda’s Show HN launch has captured the attention of developers and enterprise stakeholders alike by offering a new approach to executing GPU-intensive workloads. Traditionally, running GPU jobs required complex setups involving container orchestration, virtual machines, or dedicated hardware pools. Velda simplifies this process by providing a serverless platform that abstracts away the underlying infrastructure, allowing users to focus on their computational tasks rather than deployment logistics.

This shift toward serverless GPU jobs aligns with broader trends in cloud-native computing, where reducing operational overhead and increasing flexibility are top priorities. Velda’s approach addresses common pain points such as resource provisioning delays, dependency management, and scalability hurdles. In 2026, Velda illustrates how cloud services are changing high-performance computing (HPC), especially for AI, machine learning, and data analytics tasks.

By eliminating the containerization layer, Velda reduces complexity, accelerates deployment times, and offers an intuitive interface for managing GPU workflows. For developers experimenting with neural networks or data scientists executing large models, Velda’s serverless architecture provides both speed and scalability. Moreover, Velda integrates with existing tools like project management software and browser extensions, creating a seamless environment for productivity and collaboration.

What is Velda?

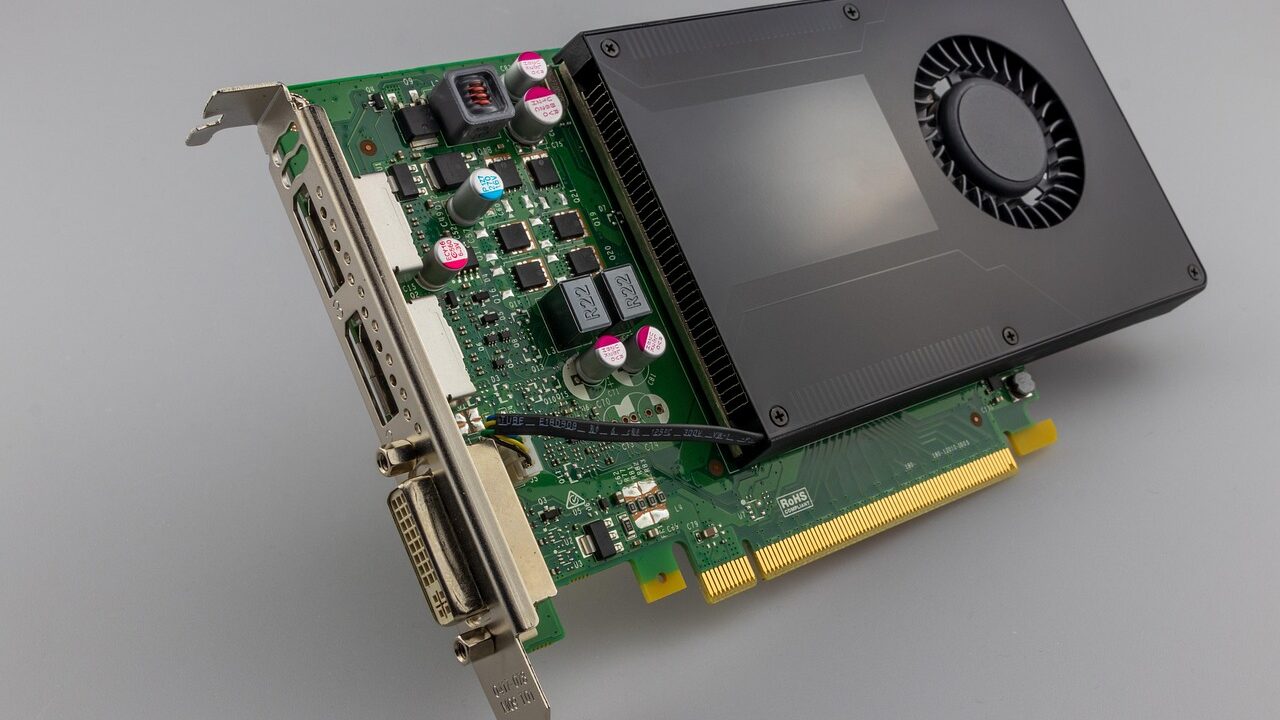

Velda is a cloud-based platform designed to facilitate GPU-accelerated job execution without the need for containers or dedicated hardware. It uses a serverless architecture that dynamically allocates GPU resources based on workload demands. In practice, Velda enables developers to submit computational jobs via simple APIs or command-line tools, with the platform managing resource provisioning, execution, and cleanup transparently.

The core philosophy of Velda is to democratize access to GPU computing, making it accessible to a broader range of users—from individual developers to large enterprises—without requiring extensive infrastructure knowledge. As a SaaS platform, Velda offers pay-as-you-go pricing, which aligns with modern software consumption models and reduces upfront costs.

Its architecture is built on a cloud-native design intended to provide high availability, fault tolerance, and security. Velda’s backend orchestrates GPU resource allocation across multiple cloud providers, optimizing for latency and cost efficiency. This multi-cloud approach is meant to let users run complex jobs faster and more reliably than traditional on-premises setups or container-based solutions.

Core Features of Velda in 2026

1. Serverless GPU Job Management

The flagship feature of Velda is its ability to run GPU-accelerated jobs serverlessly. Users submit tasks through an API, CLI, or web interface, and Velda automatically provisions GPU resources as needed. This removes the need for manual server configuration, container setup, or cluster management.

Velda’s scheduler balances workload across cloud regions and GPU types to improve performance. It also supports preemptible instances for cost savings, and users can set priority levels for different jobs. The platform provides real-time monitoring and logs, making it easier to troubleshoot and optimize performance.
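To make the idea of job priority levels concrete, here is a minimal sketch of a priority queue in Python. It is not Velda’s scheduler: the `Job` and `JobQueue` names, and the convention that a lower number means higher priority, are assumptions made purely for illustration.

```python
import heapq
import itertools
from dataclasses import dataclass, field


@dataclass(order=True)
class Job:
    # Hypothetical job record; lower `priority` values run first.
    priority: int
    seq: int
    name: str = field(compare=False)


class JobQueue:
    """Toy priority queue: highest-priority job first, FIFO within a level."""

    def __init__(self):
        self._heap = []
        self._counter = itertools.count()  # tie-breaker preserving submit order

    def submit(self, name, priority):
        heapq.heappush(self._heap, Job(priority, next(self._counter), name))

    def next_job(self):
        return heapq.heappop(self._heap).name if self._heap else None


queue = JobQueue()
queue.submit("nightly-batch", priority=5)
queue.submit("urgent-inference", priority=0)
queue.submit("hyperparameter-sweep", priority=5)
order = [queue.next_job() for _ in range(3)]
print(order)
```

The sequence counter keeps jobs with equal priority in submission order, which avoids starving older jobs at the same level.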

This model significantly shortens deployment cycles, reduces infrastructure costs, and allows teams to iterate faster on data science projects or AI experiments.

2. Compatibility Without Containers

Unlike many GPU cloud services that depend heavily on Docker containers or Kubernetes, Velda operates independently of such frameworks. It allows users to run jobs directly from scripts, command-line commands, or through API calls, with the platform handling dependencies internally.

This approach is particularly advantageous for teams facing complex dependency chains or working with legacy codebases that aren’t container-friendly. Velda’s environment supports multiple frameworks, including TensorFlow, PyTorch, and CUDA, among others, without requiring users to bundle their code into containers.

By removing containers from the equation, Velda accelerates deployment times, reduces configuration errors, and simplifies workflows—especially for fast-paced research environments or tight project deadlines.

3. Seamless Integration with Workflow Tools

Velda integrates smoothly with popular project management software, code repositories, and development environments. Whether connected through REST APIs, browser extensions, or plug-ins, Velda enhances existing workflows without disruption.

For example, developers can trigger GPU jobs directly from Jira tickets, Slack commands, or Visual Studio Code extensions. This integration streamlines project tracking, collaboration, and code testing, enabling teams to execute compute-heavy tasks within their usual software ecosystem.

Additionally, Velda supports data transfer and version control integrations, ensuring that input datasets and model artifacts are synchronized across platforms.

Deployment Strategies and Practical Use Cases

1. Accelerating Machine Learning Model Training

One of the primary use cases for Velda is accelerating machine learning workflows. Data scientists can submit training jobs directly via API from Jupyter notebooks or IDEs, without worrying about managing GPU instances or dependencies.

Velda’s dynamic resource allocation ensures that training jobs are scaled appropriately, which can dramatically decrease training times. In practice, teams can experiment with different models or hyperparameters more rapidly, reducing time-to-market for AI solutions.

Organizations adopting Velda for ML training may see significant cost savings compared to traditional GPU clusters, especially when using preemptible instances during low-demand periods.

2. Running Data-Intensive Simulations

Researchers in physics, chemistry, and engineering increasingly rely on GPU-accelerated simulations. Velda makes it easier to run complex simulations without setting up dedicated hardware or managing containers, thus broadening access to high-performance computing.

Through Velda, users can submit simulation jobs from analytical pipelines or workflow automation tools, with results delivered directly to data lakes or dashboards. This approach supports iterative research and collaborative projects where flexibility and speed are paramount.

Moreover, Velda’s support for multiple GPU types allows optimization based on the nature of simulation tasks, whether they require high memory bandwidth or a large number of cores.

3. Automated Video Processing and Rendering

Media companies and creative teams leverage GPU acceleration for video rendering, editing, and processing workflows. Velda enables them to offload these resource-intensive tasks into a serverless environment, significantly reducing turnaround times.

Content creators can trigger rendering jobs via browser extensions or API calls, integrating Velda into their existing content pipelines. The platform manages resource provisioning automatically, ensuring that even high-resolution projects can be rendered in a fraction of the time traditional setups require.

This use case exemplifies Velda’s flexibility, supporting both batch processing and real-time workflows, essential for media production pipelines.

Integration with Existing Business Software and Workflow Tools

1. Connecting Velda with Project Management Software

Connecting Velda with project management platforms like Asana, Jira, or Trello enhances tracking and collaboration. Automated triggers can be configured so that when a task reaches a specific stage, Velda automatically starts the related GPU job.

This setup minimizes manual intervention, reduces errors, and accelerates project timelines. For instance, when a data scientist marks a model as ready for training, Velda can automatically allocate resources and initiate the training process.
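The trigger pattern described above can be sketched as a small event dispatcher. Everything here is hypothetical: the `StageTrigger` class, the event field names, and the `ready_for_training` status are illustrative assumptions, and the job submission is a stub rather than a real platform call.

```python
from typing import Callable, Dict, List


class StageTrigger:
    """Maps a task status to callbacks that run when a task enters it."""

    def __init__(self) -> None:
        self._handlers: Dict[str, List[Callable[[dict], None]]] = {}

    def on(self, status: str, handler: Callable[[dict], None]) -> None:
        self._handlers.setdefault(status, []).append(handler)

    def handle_event(self, event: dict) -> int:
        """Run handlers only when the status actually changed; return count run."""
        if event.get("old_status") == event.get("new_status"):
            return 0
        handlers = self._handlers.get(event.get("new_status"), [])
        for handler in handlers:
            handler(event)
        return len(handlers)


submitted = []  # stand-in for a real job submission call


def start_training(event: dict) -> None:
    # Hypothetical: a real integration would call the platform's API here.
    submitted.append(f"train:{event['task_id']}")


trigger = StageTrigger()
trigger.on("ready_for_training", start_training)
trigger.handle_event({"task_id": "ML-42", "old_status": "in_review",
                      "new_status": "ready_for_training"})
trigger.handle_event({"task_id": "ML-43", "old_status": "todo",
                      "new_status": "in_review"})
print(submitted)
```

Checking that the status actually changed guards against duplicate webhook deliveries starting the same job twice.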

Such integrations foster a seamless flow from planning to execution, ensuring that high-performance computing is embedded into everyday project workflows.

2. Browser Extensions for Easy Job Submission

Browser extensions compatible with Chrome and Firefox provide quick access to Velda’s GPU job submission features. Users can select datasets, scripts, or models directly from their browser, triggering Velda jobs with a single click.

This is especially useful for teams working across multiple platforms or for rapid prototyping. With a simple interface overlay, users avoid switching contexts or entering lengthy command-line commands, streamlining the entire process.

Browser extensions also facilitate monitoring job status, downloading logs, or adjusting parameters, creating a user-friendly ecosystem for non-technical stakeholders involved in AI or data projects.

3. SaaS Tool Compatibility

Velda integrates well with popular SaaS tools used for data management, analytics, and visualization. It connects with cloud storage services such as Dropbox, Google Drive, or OneDrive, ensuring easy data ingestion and output retrieval.

Additionally, Velda can synchronize with BI tools like Tableau or Power BI to visualize real-time results from GPU-accelerated runs. The platform supports a broad spectrum of API integrations, making it adaptable to existing enterprise architectures.

This compatibility reduces friction for organizations adopting Velda, allowing them to incorporate GPU-accelerated processing into their broader SaaS workflows.

Velda vs. Traditional SaaS Tools and Software Comparison

1. Performance and Cost Efficiency

Compared to conventional cloud GPU offerings such as AWS EC2 GPU instances or Google Cloud GPUs, Velda’s serverless model often results in better utilization and cost savings. Its dynamic provisioning ensures that resources are allocated only when needed, avoiding idle time and associated expenses.
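The idle-time argument comes down to simple arithmetic. The sketch below compares an always-on GPU instance with billing that covers only busy hours. All figures are hypothetical and chosen for illustration; they are not published prices from any provider.

```python
def always_on_cost(hourly_rate, hours_in_period):
    """Cost of a GPU instance that stays up for the whole period."""
    return hourly_rate * hours_in_period


def on_demand_cost(hourly_rate, busy_hours):
    """Cost when billing covers only the hours the GPU is actually busy."""
    return hourly_rate * busy_hours


# Hypothetical numbers for illustration only, not published prices.
HOURLY_RATE = 2.00        # dollars per GPU-hour (assumed same for both models)
HOURS_IN_MONTH = 720      # 30 days * 24 hours
BUSY_HOURS = 90           # hours of real GPU work in the month

fixed = always_on_cost(HOURLY_RATE, HOURS_IN_MONTH)
elastic = on_demand_cost(HOURLY_RATE, BUSY_HOURS)
utilization = BUSY_HOURS / HOURS_IN_MONTH

print(f"always-on: ${fixed:.2f}, on-demand: ${elastic:.2f}")
print(f"utilization of the always-on instance: {utilization:.1%}")
```

If the on-demand rate is higher than the always-on rate, the break-even utilization is the ratio of the two rates, so the advantage shrinks as utilization rises.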

Performance-wise, Velda’s optimized scheduling and workload distribution can outperform static configurations, especially for variable workloads needing rapid scaling. Clients report reduced training and processing times, directly translating into increased productivity.

Choosing Velda over traditional services involves assessing trade-offs: while it simplifies management and offers flexibility, it may lack some specialized control features found in dedicated cloud GPU services. However, for most use cases, Velda’s automation and ease of use outweigh these considerations.

2. Ease of Use and Adoption Curve

Velda’s API-centric design and minimal configuration requirements enable faster onboarding compared to platforms that depend on container orchestration or complex setup procedures. This ease of use accelerates adoption, especially in environments where technical staff may not specialize in HPC infrastructure.

Traditional SaaS platforms might offer a more mature feature set for specific applications, but Velda’s flexibility and serverless approach make it a compelling choice for innovative workflows and rapid experimentation.

Organizations considering Velda should evaluate their existing workflows, dependency chains, and team expertise to determine the best fit. Often, the benefits of simplified deployment and management outweigh the learning curve associated with new platform adoption.

3. Security and Compliance

Security remains a paramount concern for enterprise users. Velda incorporates industry-standard encryption, access controls, and audit logging to meet compliance requirements. Its architecture supports multi-region deployments to address data residency concerns.

Compared with traditional SaaS tools that may be more established, Velda’s security features are designed to integrate with enterprise security policies, including single sign-on (SSO) and role-based access control (RBAC).

Careful evaluation of compliance frameworks relevant to specific industries (healthcare, finance, government) is essential before adopting Velda, but its architecture is designed to support these standards effectively.

Future Trends and Adoption Considerations

1. Increasing Adoption of Serverless Architectures

2026 is witnessing a significant shift toward serverless computing, driven by the desire for scalability, cost reduction, and operational simplicity. Velda exemplifies this trend for GPU workloads, providing a blueprint for future high-performance SaaS developments.

As cloud providers and third-party platforms enhance their serverless offerings, Velda’s model is likely to evolve, incorporating more automation, multi-cloud compatibility, and AI-driven management features.

Organizations should monitor these developments to stay ahead in adopting flexible computing solutions that align with their strategic goals.

2. Decentralization and Edge Computing

While Velda currently operates predominantly in cloud data centers, future iterations could extend to edge computing environments, enabling low-latency processing closer to data sources. This would be especially relevant for IoT applications, autonomous systems, and real-time analytics.

Decentralized GPU processing can reduce bandwidth costs and improve responsiveness, opening new avenues for Velda’s architecture in specialized industries.

Understanding the interplay between centralized cloud services and edge deployments will be crucial for organizations planning long-term infrastructure strategies.

3. Integration with AI and Automation Tools

The integration of Velda with AI orchestration platforms and automation frameworks could further streamline complex workflows. Automated job scheduling, dependency management, and cost optimization driven by machine learning models will make GPU-accelerated tasks more intelligent and autonomous.

This evolution will enhance Velda’s value proposition, making it an integral part of AI-driven enterprise ecosystems.

Conclusion

Velda’s Show HN debut marks a notable advance in GPU computing, delivering a serverless, containerless experience that simplifies high-performance tasks. By harnessing Velda’s capabilities, developers and organizations can reduce operational complexity, accelerate project timelines, and improve cost efficiency.

Its seamless integration with existing workflow tools, compatibility with SaaS platforms, and adaptability for a wide range of applications position Velda as a compelling choice in 2026’s software landscape. As adoption grows and new features emerge, Velda’s approach may well set the standard for future GPU-accelerated cloud services.

For further insights into emerging SaaS tools and performance optimization strategies, consult industry reviews such as those found at PCMag. Organizations aiming to stay at the forefront of technological innovation should consider how Velda’s serverless GPU execution can enhance their project management and data processing workflows in the coming years.

Choosing Velda requires evaluating your project’s specific needs, infrastructure compatibility, and security considerations. However, its promise of simplified, scalable, and cost-effective GPU workloads makes it a noteworthy addition to the business software landscape in 2026 and beyond.

Leveraging Advanced Frameworks for Optimal GPU Utilization

As GPU-accelerated workloads become increasingly complex, integrating Velda with advanced frameworks such as TensorFlow, PyTorch, and JAX can significantly elevate performance and flexibility. Velda’s architecture is designed to seamlessly interface with these frameworks, enabling developers to run large-scale training and inference jobs serverlessly without the overhead of containerization.

For instance, when deploying PyTorch models, Velda allows direct access to GPU resources through custom runtime environments, bypassing traditional container layers. This approach reduces latency and maximizes throughput, especially in scenarios involving distributed training or hyperparameter tuning. Additionally, Velda supports dynamic resource allocation, allowing workloads to scale based on real-time demands, which is critical when working with large models like GPT or vision transformers.

To further optimize, developers can utilize Velda’s built-in support for mixed-precision training and gradient checkpointing, reducing memory footprint and accelerating computation. These tactics are especially valuable in reducing costs while maintaining high fidelity in model training. The combination of Velda’s serverless paradigm with these frameworks creates a robust platform for end-to-end AI workflows, from data preprocessing to deployment, without the need for managing complex orchestration containers.

Handling Failure Modes and Ensuring Resilience in GPU-Accelerated Serverless Jobs

While Velda’s architecture is designed for high reliability, understanding potential failure modes is essential for maintaining robust GPU-accelerated workloads. Common issues include transient GPU failures, network disruptions, and resource contention. Velda incorporates several resilience strategies to mitigate these risks, ensuring seamless job execution even in adverse conditions.

One key tactic involves automatic job checkpointing. Velda periodically saves intermediate states of computations, enabling jobs to resume from the last checkpoint in case of failures. This approach minimizes recomputation and reduces downtime. Additionally, Velda employs intelligent job retries with exponential backoff, which helps recover from transient GPU errors or network hiccups without manual intervention.
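Checkpointing and retries with exponential backoff combine naturally, as the following sketch suggests. It is a generic illustration rather than Velda’s implementation: the JSON checkpoint file, the `TransientError` class, and the simulated faults are all assumptions.

```python
import json
import os
import tempfile
import time


class TransientError(Exception):
    """Stands in for a recoverable fault such as a dropped connection."""


def load_checkpoint(path):
    """Return the last saved step, or 0 if no checkpoint exists yet."""
    if os.path.exists(path):
        with open(path) as f:
            return json.load(f)["step"]
    return 0


def save_checkpoint(path, step):
    with open(path, "w") as f:
        json.dump({"step": step}, f)


def run_job(path, total_steps, fail_at, log):
    """Process steps from the last checkpoint; fail once at each step in `fail_at`."""
    step = load_checkpoint(path)
    while step < total_steps:
        if step in fail_at:
            fail_at.discard(step)      # fail only the first time
            raise TransientError(f"simulated fault at step {step}")
        log.append(step)               # the "work" for this step
        step += 1
        save_checkpoint(path, step)    # checkpoint after every completed step


def run_with_retries(job, max_attempts=5, base_delay=0.01, sleep=time.sleep):
    """Retry `job` on TransientError, doubling the delay after each failure."""
    delays = []
    for attempt in range(max_attempts):
        try:
            job()
            return delays
        except TransientError:
            if attempt == max_attempts - 1:
                raise
            delay = base_delay * (2 ** attempt)
            delays.append(delay)
            sleep(delay)


with tempfile.TemporaryDirectory() as tmp:
    ckpt = os.path.join(tmp, "job.ckpt.json")
    work_log = []
    faults = {3, 7}
    delays = run_with_retries(lambda: run_job(ckpt, 10, faults, work_log))

print(work_log)
print(delays)
```

Because each retry resumes from the saved step, no step is processed twice. Production systems commonly add random jitter to the delay so that many failed jobs do not retry at the same instant.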

Monitoring and diagnostics are integral to preemptively identifying issues. Velda provides detailed metrics on GPU utilization, error logs, and performance bottlenecks through its integrated dashboard. By analyzing these metrics, users can fine-tune their workloads, for example, by adjusting batch sizes or optimizing data pipelines. Furthermore, Velda’s architecture supports multi-region deployments, allowing workloads to migrate seamlessly across data centers in case of localized failures, thus ensuring high availability.

Optimizing failure handling also involves understanding workload-specific failure modes. For example, deep learning training jobs might encounter out-of-memory errors; in such cases, Velda’s automatic resource scaling and memory management policies dynamically allocate additional GPU memory or repartition datasets. This proactive approach ensures continuous progress with minimal manual intervention, ultimately delivering a resilient, serverless GPU compute environment for demanding AI workloads.
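One common way to recover from out-of-memory errors at the application level is to repartition the work into smaller batches. The sketch below halves the batch size on failure. It uses Python’s built-in `MemoryError` as a stand-in, since real GPU frameworks typically raise their own exception types, and `fake_step` is a hypothetical placeholder for a GPU computation.

```python
def run_with_batch_fallback(step_fn, data, batch_size, min_batch_size=1):
    """Process `data` in batches, halving the batch size on MemoryError."""
    results, i = [], 0
    while i < len(data):
        batch = data[i:i + batch_size]
        try:
            results.append(step_fn(batch))
            i += len(batch)
        except MemoryError:
            if batch_size <= min_batch_size:
                raise
            batch_size = max(min_batch_size, batch_size // 2)  # repartition
    return results, batch_size


def fake_step(batch, capacity=3):
    """Stand-in for a GPU step that fails when the batch exceeds `capacity`."""
    if len(batch) > capacity:
        raise MemoryError("simulated out-of-memory")
    return sum(batch)


outputs, final_batch_size = run_with_batch_fallback(fake_step, list(range(10)), 8)
print(outputs, final_batch_size)
```

For training workloads, shrinking the batch changes the optimization dynamics, so this fallback is often paired with gradient accumulation to keep the effective batch size constant.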

Performance Tuning and Cost Optimization Strategies in Velda

Maximizing the efficiency of GPU-accelerated serverless jobs requires precise performance tuning and cost-awareness. Velda offers a suite of tools and best practices to help users optimize both computational throughput and expenditure. One of the fundamental tactics involves leveraging Velda’s intelligent scheduling algorithms, which prioritize jobs based on resource requirements and urgency, ensuring optimal use of available GPU resources.

For fine-grained performance tuning, Velda supports workload profiling, enabling users to identify bottlenecks such as data transfer latency, suboptimal kernel launches, or inefficient memory usage. Using these insights, developers can optimize data pipelines—employing techniques like data prefetching, asynchronous data loading, and in-memory caching—to reduce idle GPU time. Additionally, Velda’s support for mixed-precision computation allows models to run faster and consume less memory, translating into cost savings without sacrificing accuracy.
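Data prefetching can be illustrated with a background thread that loads batches ahead of the consumer. This is a minimal, framework-independent sketch; the `prefetch` and `load_batches` helpers are hypothetical names, and the loader is a stand-in for slow disk or network I/O.

```python
import queue
import threading


def prefetch(batches, buffer_size=2):
    """Yield items from `batches` while a background thread loads ahead."""
    buffer = queue.Queue(maxsize=buffer_size)
    sentinel = object()  # marks the end of the stream

    def producer():
        for batch in batches:
            buffer.put(batch)   # blocks when the buffer is full
        buffer.put(sentinel)

    threading.Thread(target=producer, daemon=True).start()
    while True:
        item = buffer.get()
        if item is sentinel:
            return
        yield item


def load_batches(n):
    """Stand-in for slow I/O; a real loader would read from disk or network."""
    for i in range(n):
        yield [i * 10 + j for j in range(3)]


results = [sum(batch) for batch in prefetch(load_batches(4))]
print(results)
```

The bounded queue caps memory use while letting loading overlap with computation. This sketch does not forward exceptions raised inside the producer, which a more complete version would need to handle.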

Cost control is further enhanced through dynamic resource provisioning. Velda automatically adjusts GPU allocation based on workload demands, scaling resources up during intensive training phases and down during idle periods. Moreover, Velda’s billing model encourages efficient job design; for example, batching multiple inference requests together can significantly reduce per-request costs, especially in high-throughput inference scenarios.
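The effect of batching on per-request cost can be estimated with a simple model in which every invocation pays a fixed overhead once. The linear cost model and all numbers below are assumptions for illustration, not measurements or real prices.

```python
import math


def cost_per_request(num_requests, batch_size, overhead_s, per_item_s, rate_per_s):
    """Average cost per request when each batch pays a fixed overhead once."""
    num_batches = math.ceil(num_requests / batch_size)
    total_seconds = num_batches * overhead_s + num_requests * per_item_s
    return total_seconds * rate_per_s / num_requests


# Hypothetical figures: 50 ms fixed overhead per invocation, 5 ms of GPU
# time per item, and a made-up rate of $0.001 per GPU-second.
unbatched = cost_per_request(1000, 1, 0.050, 0.005, 0.001)
batched = cost_per_request(1000, 32, 0.050, 0.005, 0.001)
print(f"batch=1:  ${unbatched:.6f} per request")
print(f"batch=32: ${batched:.6f} per request")
```

Under this model the saving comes entirely from amortizing the fixed overhead. Larger batches also add queueing latency, so the right batch size depends on the latency budget.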

Advanced users can implement custom optimization strategies by integrating Velda with workload orchestration frameworks such as Ray or Dask. These frameworks facilitate task parallelism and workload distribution, enabling more granular control over GPU utilization. Also, gradient accumulation lets large models be trained with smaller per-step batches while preserving the effective batch size, which reduces GPU memory requirements and can lower costs.
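Gradient accumulation works because the average of equally sized micro-batch gradients equals the full-batch gradient of a mean loss. The pure-Python sketch below checks this for a one-parameter linear model with mean squared error; the model and data are illustrative assumptions.

```python
def grad_mse(w, xs, ys):
    """Gradient of mean((w*x - y)^2) with respect to w."""
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)


def accumulated_grad(w, xs, ys, micro_batch_size):
    """Average micro-batch gradients; assumes equal-sized micro-batches."""
    assert len(xs) % micro_batch_size == 0
    num_micro = len(xs) // micro_batch_size
    total = 0.0
    for k in range(num_micro):
        lo, hi = k * micro_batch_size, (k + 1) * micro_batch_size
        # Scale each micro-batch gradient so the sum equals the full-batch mean.
        total += grad_mse(w, xs[lo:hi], ys[lo:hi]) / num_micro
    return total


xs = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
ys = [2.0, 4.0, 6.0, 8.0, 10.0, 12.0, 14.0, 16.0]
w = 0.5

full = grad_mse(w, xs, ys)
accum = accumulated_grad(w, xs, ys, micro_batch_size=2)
print(full, accum)
```

Only one micro-batch needs to fit in memory at a time, which is where the memory saving comes from. The trade-off is more forward and backward passes per optimizer step.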

Finally, Velda’s analytics dashboard provides detailed cost breakdowns and performance metrics, empowering teams to make informed decisions about resource allocation, job scheduling, and algorithmic adjustments. By combining these strategies, organizations can achieve a harmonious balance between performance excellence and cost efficiency, capitalizing on Velda’s serverless GPU capabilities to stay agile and competitive in 2026’s AI landscape.

Community and Ecosystem Integration: Show HN Velda Run

As Velda matures into a comprehensive platform, fostering a vibrant community around it becomes essential. The phrase ‘Show HN Velda Run’, which echoes the Show HN tag that Hacker News uses for project showcases, has gained popularity among early adopters sharing their experiences, workflows, and optimization tips. This community-driven approach accelerates innovation, provides real-world validation, and offers valuable feedback that shapes Velda’s future features.

Developers and organizations frequently exchange best practices on platforms like GitHub, Reddit, and specialized forums, discussing topics ranging from advanced debugging techniques to best ways to integrate Velda with existing machine learning pipelines. These discussions often include concrete examples, such as optimizing multi-GPU distributed training or implementing custom failure recovery strategies—knowledge that accelerates adoption and troubleshooting for newcomers.

The Velda ecosystem is also expanding through integrations with popular ML toolkits, data management systems, and orchestration frameworks. For example, support for MLflow enables seamless experiment tracking and reproducibility, while integration with Apache Spark facilitates large-scale data preprocessing. Additionally, Velda’s open APIs encourage third-party extensions, fostering innovation and customization tailored to specific industry needs.

Community events, webinars, and hackathons under the banner of ‘Show HN Velda Run’ promote collaborative learning and showcase cutting-edge use cases. These initiatives help demystify GPU serverless deployments, inspire new applications, and cultivate a network of practitioners dedicated to pushing the boundaries of what Velda can achieve. In this way, Velda serves not only as a tool but also as an ecosystem driving advancements in AI infrastructure.

Looking ahead, the Velda team plans to release enhanced SDKs and CLI tools, simplifying deployment workflows and enabling more automation. The community’s active involvement ensures these features address real-world needs and foster a culture of shared growth. Ultimately, the collective effort around Velda accelerates the transition toward fully serverless, GPU-accelerated AI workloads that are resilient, efficient, and accessible across diverse industries.